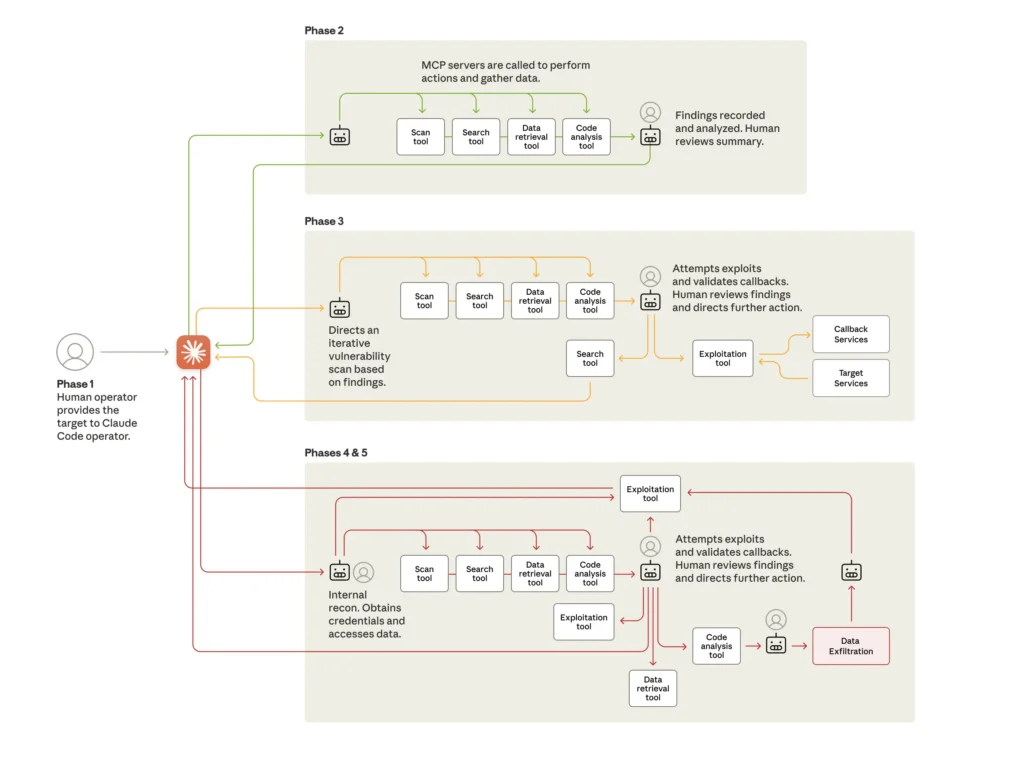

Researchers from Anthropic said they recently observed the “first reported AI-orchestrated cyber espionage campaign” after detecting Chinese state-sponsored hackers using the company’s Claude AI tool in a campaign aimed at dozens of targets. Outside researchers are much more measured in describing the significance of the discovery.

Anthropic published two reports on the campaign on Thursday. In September, the reports said, Anthropic discovered a “highly sophisticated espionage campaign,” carried out by a Chinese state-sponsored group, that used Claude Code to automate up to 90 percent of the work. Human intervention was required “only sporadically (perhaps 4-6 critical decision points per hacking campaign).” Anthropic said the hackers had employed AI agentic capabilities to an “unprecedented” extent.

“This campaign has substantial implications for cybersecurity in the age of AI ‘agents’—systems that can be run autonomously for long periods of time and that complete complex tasks largely independent of human intervention,” Anthropic said. “Agents are valuable for everyday work and productivity—but in the wrong hands, they can substantially increase the viability of large-scale cyberattacks.”

“Ass-kissing, stonewalling, and acid trips”

Outside researchers weren’t convinced the discovery was the watershed moment the Anthropic posts made it out to be. They questioned why these sorts of advances are often attributed to malicious hackers when white-hat hackers and developers of legitimate software keep reporting only incremental gains from their use of AI.

“I continue to refuse to believe that attackers are somehow able to get these models to jump through hoops that nobody else can,” Dan Tentler, executive founder of Phobos Group and a researcher with expertise in complex security breaches, told Ars. “Why do the models give these attackers what they want 90% of the time but the rest of us have to deal with ass-kissing, stonewalling, and acid trips?”
